MoonJeong Park

I am a Ph.D. student in the Machine Learning Lab at the Graduate School of Artificial Intelligence, POSTECH, advised by Prof. Dongwoo Kim.

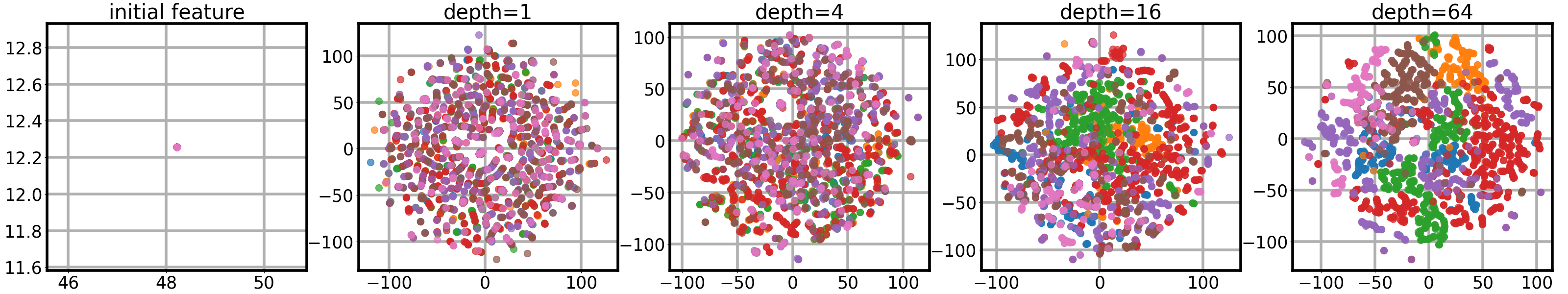

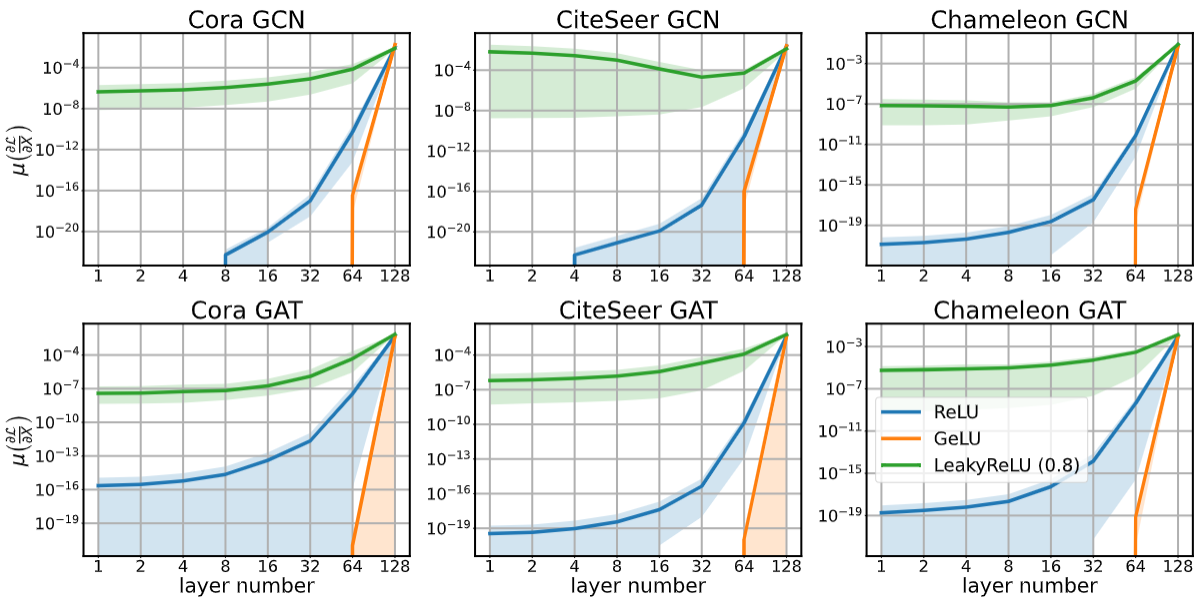

My research focuses on the theoretical analysis of neural network architectures and their implicit biases. My recent work studies how GNN architectures affect generalization error and optimization landscapes. I am also broadening my scope to Transformer architectures, with the aim of generalizing my findings to a wider range of neural systems.

I’m actively seeking opportunities for meaningful collaborations and internships. If you find my work interesting or have any questions, please don’t hesitate to reach out.

News

| Mar 25, 2026 | 🇦🇺 Excited to be visiting the Computational Media Lab at ANU as a visiting researcher! (March – June 2026) |

|---|---|

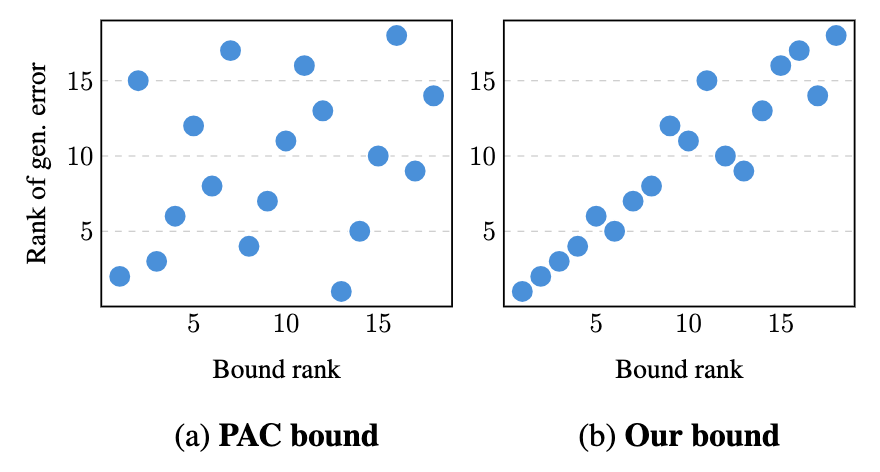

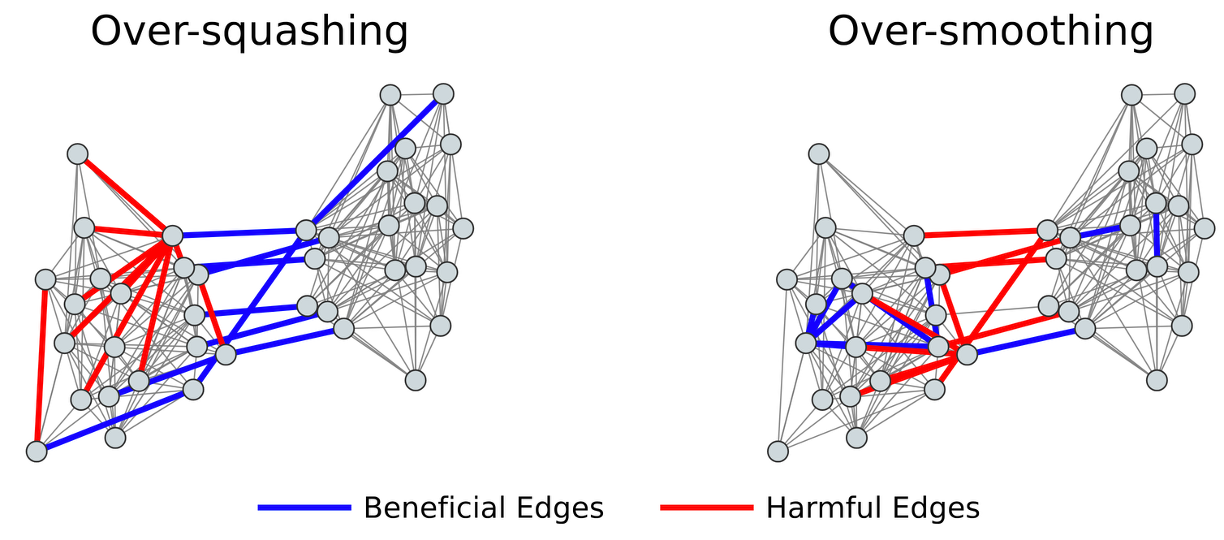

| Mar 11, 2026 | 📚 Check out our latest preprint on arXiv, where we propose practically applicable transductive generalization bounds for GNNs. |

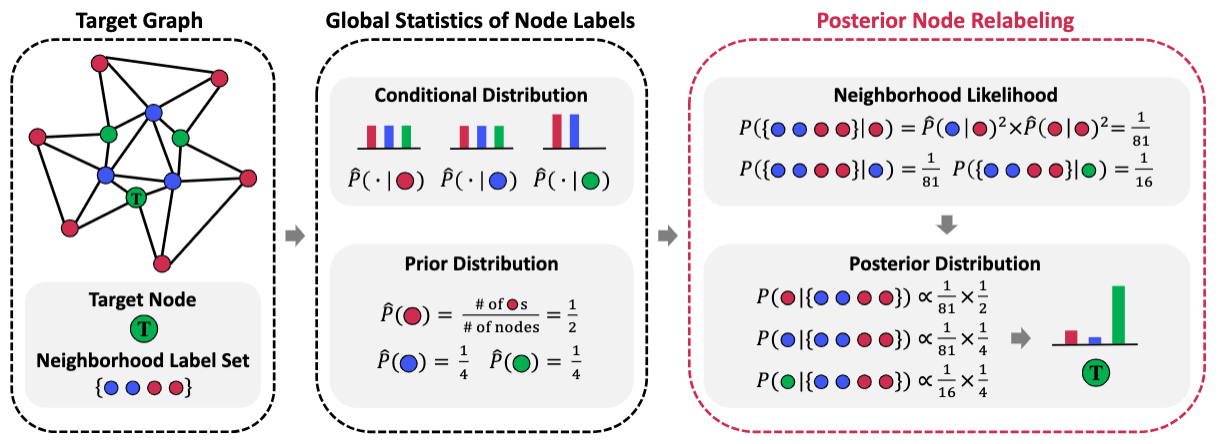

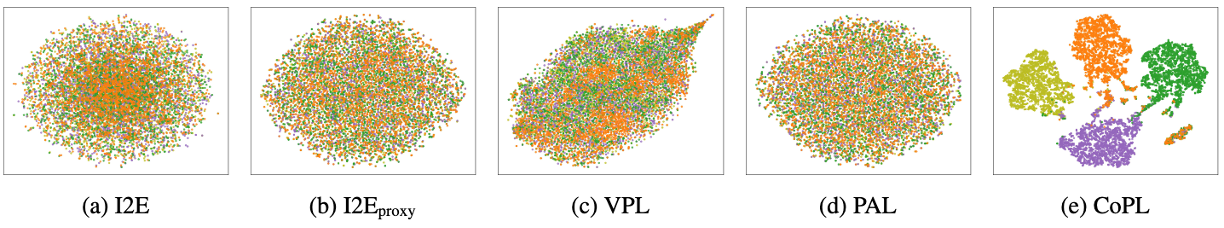

| Nov 8, 2025 | 🏆 A paper about label smoothing in GNN is accepted to AAAI 2026 as oral presentation! |

| Sep 18, 2025 | 🎉 A paper suggests influence fuction for GNNs accepted to NeurIPS 2025! |

| Aug 21, 2025 | 🎉 A paper about Personalizing LLMs with graph-based collaborative filtering framework is accepted to EMNLP 2025! |

Education

| Period | Institution | Program |
|---|---|---|
| Sep 2022 - Present | Pohang University of Science and Technology (POSTECH), Pohang, South Korea | Ph.D. student in Computer Science and Engineering (Advisor: Dongwoo Kim) |
| Sep 2019 - Sep 2022 | Pohang University of Science and Technology (POSTECH), Pohang, South Korea | Integrated M.S. student in Computer Science and Engineering (Advisor: Dongwoo Kim) |
| Mar 2014 - Sep 2019 | Daegu Gyeongbuk Institute of Science and Technology (DGIST), Daegu, South Korea | B.S. in School of Undergraduate Studies |

Experience

| Period | Organization | Role |
|---|---|---|
| March 2026 - Present | Computational Media Lab at Australian National University, Canberra, Australia | Visiting Researcher |
| August 2020 - June 2022 | POSCO AI education program, Pohang, South Korea | Teaching Assistant |
| June 2018 - January 2019 | Data Mining Lab at Seoul National University, Seoul, South Korea | Research Intern |
| June 2016 - July 2016 | Multi-Scale Robotics Lab at Eidgenössische Technische Hochschule Zürich, Zürich, Switzerland | Research Intern |

Publications

\* indicates equal contribution.

Honors and Awards

BK21 Best Paper Award, POSTECH AIGS (2024)


Best Paper Award, IEEE BigComp (2021)
